- HyMEM retrieves diverse strategy memory instead of repetitive actions.

- The agent adapts from initial search to constraint-focused filtering.

- Task is completed within step budget with stable long-horizon planning.

CoMEM: integrating multimodal and multilingual knowledge into VLMs using compact continuous memory.

NeurIPS 2025

GUI agents with scalable continuous memory for unfamiliar interfaces and long-horizon tasks.

arXiv 2025

Project page for Planner Matters with framework, results, and demo materials.

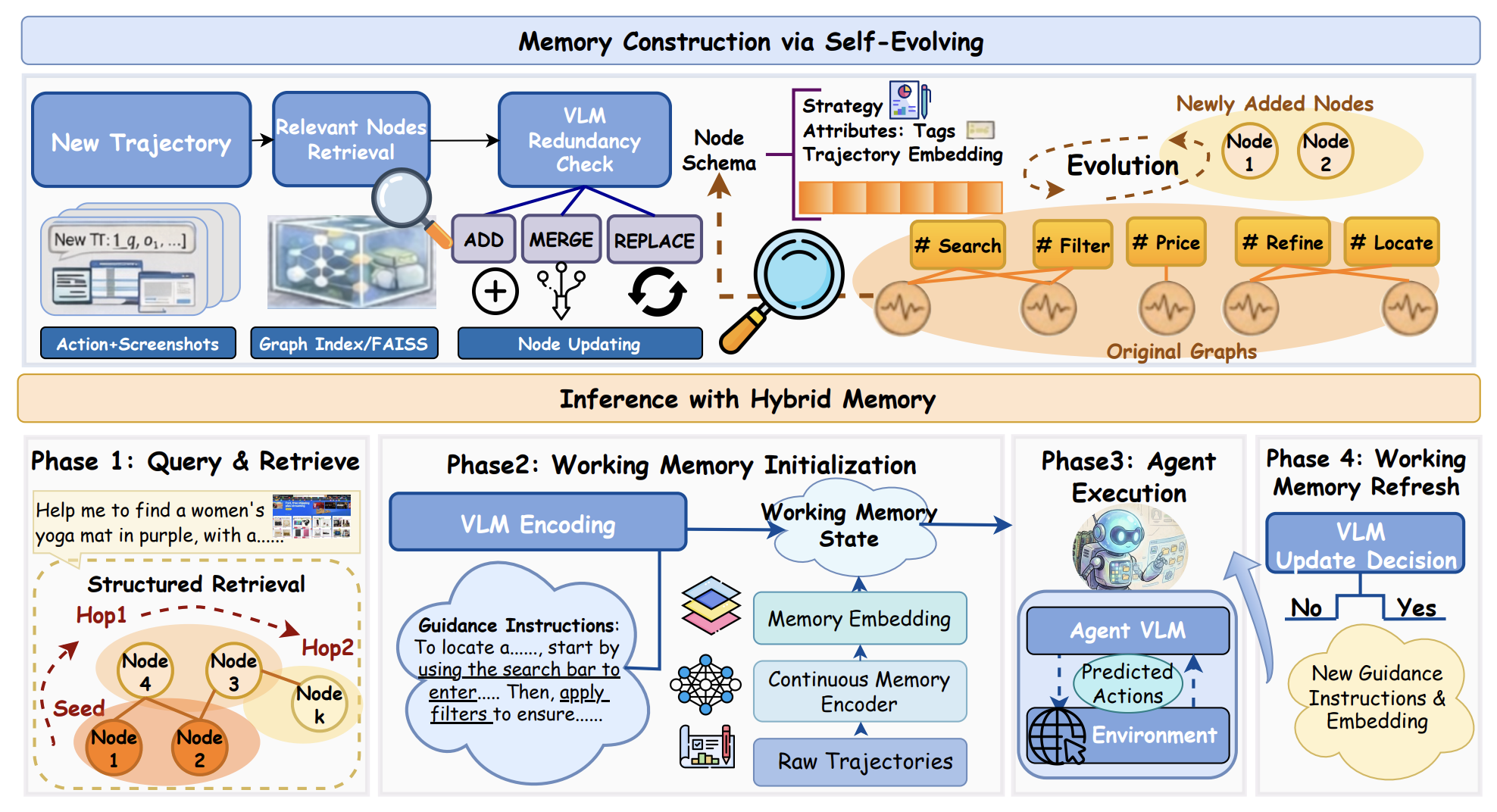

Project Page

Hybrid memory graph with discrete strategy nodes and continuous trajectory embeddings.

The remarkable progress of vision-language models (VLMs) has enabled GUI agents to interact with computers in a human-like manner. Yet real-world computer-use tasks remain difficult due to long-horizon workflows, diverse interfaces, and frequent intermediate errors. HyMEM introduces a graph-based hybrid memory that couples discrete high-level symbolic strategies with continuous trajectory embeddings, supporting multi-hop retrieval, self-evolution via structured updates, and on-the-fly working-memory refresh during inference.
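To make the idea of a hybrid memory graph more concrete, here is a minimal Python sketch. It is an illustration only, not the HyMEM implementation: the names `MemoryNode`, `HybridMemory`, `merge_threshold`, and the three-dimensional toy embeddings are all hypothetical, and the merge rule (averaging embeddings of near-duplicate nodes) is an assumed stand-in for the structured updates described above. Each node pairs a discrete strategy string with a continuous embedding, and retrieval picks the most similar seed nodes and then expands along graph edges for a fixed number of hops. Working-memory refresh during inference is not modeled here.

```python
from dataclasses import dataclass, field
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


@dataclass
class MemoryNode:  # hypothetical name, for illustration only
    strategy: str                                  # discrete, human-readable strategy
    embedding: list                                # continuous trajectory embedding (toy vector)
    neighbors: set = field(default_factory=set)    # ids of linked nodes


class HybridMemory:  # hypothetical name, for illustration only
    def __init__(self, merge_threshold=0.95):
        self.nodes = {}                            # node id -> MemoryNode
        self.merge_threshold = merge_threshold     # assumed similarity cutoff for merging

    def add(self, strategy, embedding):
        """Assumed update rule: merge into a near-duplicate node, else create a new one."""
        for node_id, node in self.nodes.items():
            if cosine(node.embedding, embedding) >= self.merge_threshold:
                node.embedding = [(x + y) / 2 for x, y in zip(node.embedding, embedding)]
                return node_id
        node_id = len(self.nodes)
        self.nodes[node_id] = MemoryNode(strategy, list(embedding))
        return node_id

    def link(self, a, b):
        """Connect two nodes with an undirected edge."""
        self.nodes[a].neighbors.add(b)
        self.nodes[b].neighbors.add(a)

    def retrieve(self, query, k=1, hops=1):
        """Pick the k most similar seed nodes, then expand along edges for `hops` steps."""
        ranked = sorted(
            self.nodes,
            key=lambda i: cosine(self.nodes[i].embedding, query),
            reverse=True,
        )
        selected = list(ranked[:k])
        frontier = list(selected)
        for _ in range(hops):
            next_frontier = []
            for node_id in frontier:
                for nb in sorted(self.nodes[node_id].neighbors):
                    if nb not in selected:
                        selected.append(nb)
                        next_frontier.append(nb)
            frontier = next_frontier
        return [self.nodes[i].strategy for i in selected]


memory = HybridMemory()
search = memory.add("Start with a broad keyword search", [1.0, 0.0, 0.0])
filt = memory.add("Narrow results with constraint filters", [0.0, 1.0, 0.0])
cart = memory.add("Verify the item before checkout", [0.0, 0.0, 1.0])
memory.link(search, filt)
memory.link(filt, cart)

# One hop from the best-matching node also surfaces its linked follow-up strategy.
print(memory.retrieve([0.9, 0.1, 0.0], k=1, hops=1))
```

With `hops=1`, the query retrieves the search strategy and its linked filtering strategy; raising `hops` to 2 also reaches the third node. A real system would replace the toy vectors with learned trajectory embeddings.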


Task success rates (%) across WebVoyager, Mind2Web, and MMInA.

| Backbone | Model / Method | WebVoyager Amz | WebVoyager Cour | WebVoyager Recp | WebVoyager Map | Mind2Web Info | Mind2Web Svc | Mind2Web Ent | Mind2Web Trav | MMInA Wiki | Overall |
|---|---|---|---|---|---|---|---|---|---|---|---|
| **Closed-Source** | | | | | | | | | | | |
| | GPT-4o | 24.4 | 7.1 | 13.3 | 36.6 | 7.8 | 14.1 | 3.4 | 19.4 | 51.0 | 19.7 |
| | Gemini-Pro-Vision | 41.7 | 42.9 | 20.0 | 53.7 | 16.7 | 22.4 | 0.0 | 19.4 | 50.0 | 29.6 |
| | Claude-4 | 63.4 | 28.6 | 33.3 | 70.0 | 25.6 | 40.0 | 6.9 | 9.7 | 52.0 | 36.6 |
| **Open-Source** | | | | | | | | | | | |
| | Qwen2.5-VL-32B | 46.3 | 26.2 | 6.7 | 29.3 | 14.1 | 20.0 | 6.9 | 9.7 | 43.0 | 22.5 |
| | CogAgent | 12.2 | 9.5 | 26.7 | 9.8 | 24.4 | 8.2 | 13.8 | 16.1 | 21.0 | 15.7 |
| | Websight | 24.4 | 4.8 | 13.3 | 29.3 | 10.3 | 3.5 | 3.4 | 0.0 | 12.0 | 11.2 |
| UI-TARS-1.5-7B | Baseline | 31.7 | 16.7 | 20.0 | 31.7 | 6.4 | 4.7 | 6.9 | 0.0 | 36.0 | 17.1 |
| UI-TARS-1.5-7B | + Reasoning Bank | 0.0 | 11.9 | 6.7 | 12.2 | 7.7 | 8.2 | 3.7 | 6.7 | 44.0 | 11.2 |
| UI-TARS-1.5-7B | + AWM | 4.9 | 7.1 | 4.4 | 14.6 | 10.3 | 3.5 | 3.7 | 3.3 | 26.0 | 8.6 |
| UI-TARS-1.5-7B | + Discrete | 19.5 | 16.7 | 8.9 | 17.1 | 11.5 | 8.2 | 7.4 | 3.4 | 46.0 | 15.4 |
| UI-TARS-1.5-7B | + Continuous | 43.9 | 33.3 | 24.4 | 43.9 | 16.7 | 28.2 | 10.3 | 3.2 | 54.0 | 28.7 |
| UI-TARS-1.5-7B | + HyMEM | 58.5 | 28.6 | 26.7 | 53.7 | 16.7 | 25.9 | 10.3 | 6.5 | 54.0 | 31.2 |
| Qwen2.5-VL-7B | Baseline | 14.6 | 2.4 | 15.9 | 16.7 | 9.0 | 11.8 | 0.0 | 4.4 | 38.0 | 12.5 |
| Qwen2.5-VL-7B | + Reasoning Bank | 29.3 | 9.5 | 6.7 | 29.3 | 9.0 | 20.0 | 3.4 | 6.5 | 44.0 | 17.5 |
| Qwen2.5-VL-7B | + AWM | 17.1 | 4.8 | 11.1 | 29.3 | 7.7 | 10.6 | 0.0 | 6.5 | 31.0 | 13.1 |
| Qwen2.5-VL-7B | + Discrete | 22.0 | 21.4 | 8.9 | 31.7 | 9.0 | 12.9 | 10.3 | 3.2 | 34.0 | 17.0 |
| Qwen2.5-VL-7B | + Continuous | 24.4 | 17.1 | 8.9 | 34.1 | 16.7 | 23.5 | 10.3 | 12.9 | 47.0 | 21.7 |
| Qwen2.5-VL-7B | + HyMEM | 63.4 | 54.8 | 20.0 | 53.7 | 17.9 | 23.5 | 3.4 | 22.6 | 56.0 | 35.0 |
| Qwen3-VL-8B | Baseline | 36.6 | 16.7 | 13.3 | 43.9 | 9.0 | 20.0 | 10.3 | 9.7 | 38.0 | 21.9 |
| Qwen3-VL-8B | + Reasoning Bank | 36.6 | 11.9 | 17.8 | 31.7 | 10.3 | 24.7 | 17.2 | 6.5 | 48.0 | 22.7 |
| Qwen3-VL-8B | + AWM | 39.0 | 19.0 | 8.9 | 31.7 | 12.5 | 16.5 | 6.9 | 16.1 | 48.0 | 22.1 |
| Qwen3-VL-8B | + Discrete | 31.7 | 16.7 | 15.6 | 31.7 | 11.5 | 16.5 | 13.8 | 16.1 | 38.0 | 21.3 |
| Qwen3-VL-8B | + Continuous | 43.9 | 19.0 | 17.8 | 34.1 | 12.5 | 17.9 | 3.4 | 19.4 | 42.0 | 23.3 |
| Qwen3-VL-8B | + HyMEM | 46.3 | 19.0 | 26.7 | 39.0 | 17.9 | 20.0 | 6.9 | 25.8 | 47.0 | 27.6 |
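
The page does not state how the Overall column is computed. For the rows checked here, it matches an unweighted mean of the nine per-category success rates, rounded to one decimal place. The short Python sketch below reproduces that arithmetic for three rows copied from the table; the helper name `macro_average` is an assumption introduced for illustration.

```python
# Per-category success rates (%) copied from the table above, in column order:
# Amz, Cour, Recp, Map, Info, Svc, Ent, Trav, Wiki. The second tuple item is
# the Overall value reported in the table.
rows = {
    "GPT-4o": ([24.4, 7.1, 13.3, 36.6, 7.8, 14.1, 3.4, 19.4, 51.0], 19.7),
    "Qwen2.5-VL-7B Baseline": ([14.6, 2.4, 15.9, 16.7, 9.0, 11.8, 0.0, 4.4, 38.0], 12.5),
    "Qwen2.5-VL-7B + HyMEM": ([63.4, 54.8, 20.0, 53.7, 17.9, 23.5, 3.4, 22.6, 56.0], 35.0),
}


def macro_average(values):
    """Unweighted mean over categories, rounded to one decimal (assumed convention)."""
    return round(sum(values) / len(values), 1)


for name, (scores, reported) in rows.items():
    print(f"{name}: computed {macro_average(scores)}, reported {reported}")

# Absolute gain of HyMEM over the same backbone's baseline, in percentage points.
gain = rows["Qwen2.5-VL-7B + HyMEM"][1] - rows["Qwen2.5-VL-7B Baseline"][1]
print(f"Qwen2.5-VL-7B gain from HyMEM: {gain} points")  # 22.5 points
```

Because the mean is unweighted, the single MMInA column counts as much as each WebVoyager or Mind2Web category, regardless of how many tasks each category contains.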

@misc{zhu2026hymem,
  title={Hybrid Self-evolving Structured Memory for GUI Agents},
  author={Sibo Zhu and Wenyi Wu and Kun Zhou and Stephen Wang and Biwei Huang},
  year={2026},
  note={Manuscript}
}